The first time Teodor Grantcharov sat down to watch himself perform surgery, he wanted to throw the VHS tape out the window.

“My perception was that my performance was spectacular,” Grantcharov says, and then pauses—“until the moment I saw the video.” Reflecting on this operation from 25 years ago, he remembers the roughness of his dissection, the wrong instruments used, the inefficiencies that transformed a 30-minute operation into a 90-minute one. “I didn’t want anyone to see it.”

This reaction wasn’t exactly unique. The operating room has long been defined by its hush-hush nature—what happens in the OR stays in the OR—because surgeons are notoriously bad at acknowledging their own mistakes. Grantcharov jokes that when you ask “Who are the top three surgeons in the world?” a typical surgeon “always has a challenge identifying who the other two are.”

But after the initial humiliation over watching himself work, Grantcharov started to see the value in recording his operations. “There are so many small details that normally take years and years of practice to realize—that some surgeons never get to that point,” he says. “Suddenly, I could see all these insights and opportunities overnight.”

There was a big problem, though: it was the ’90s, and spending hours playing back grainy VHS recordings wasn’t a realistic quality improvement strategy. It would have been nearly impossible to determine how often his relatively mundane slipups happened at scale—not to mention more serious medical errors like those that kill some 22,000 Americans each year. Many of these errors happen on the operating table, from leaving surgical sponges inside patients’ bodies to performing the wrong procedure altogether.

While the patient safety movement has pushed for uniform checklists and other manual fail-safes to prevent such mistakes, Grantcharov believes that “as long as the only barrier between success and failure is a human, there will be errors.” Improving safety and surgical efficiency became something of a personal obsession. He wanted to make it challenging to make mistakes, and he thought developing the right system to create and analyze recordings could be the key.

It’s taken many years, but Grantcharov, now a professor of surgery at Stanford, believes he’s finally developed the technology to make this dream possible: the operating room equivalent of an airplane’s black box. It records everything in the OR via panoramic cameras, microphones, and anesthesia monitors before using artificial intelligence to help surgeons make sense of the data.

Grantcharov’s company, Surgical Safety Technologies, is not the only one deploying AI to analyze surgeries. Many medical device companies are already in the space, including Medtronic with its Touch Surgery platform, Johnson & Johnson with C-SATS, and Intuitive Surgical with Case Insights.

But most of these are focused solely on what’s happening inside patients’ bodies, capturing intraoperative video alone. Grantcharov wants to capture the OR as a whole, from the number of times the door is opened to how many non-case-related conversations occur during an operation. “People have simplified surgery to technical skills only,” he says. “You need to study the OR environment holistically.”

Success, however, isn’t as simple as just having the right technology. The idea of recording everything presents a slew of tricky questions around privacy and could raise the threat of disciplinary action and legal exposure. Because of these concerns, some surgeons have refused to operate when the black boxes are in place, and some of the systems have even been sabotaged. Aside from those problems, some hospitals don’t know what to do with all this new data or how to avoid drowning in a deluge of statistics.

Grantcharov nevertheless predicts that his system can do for the OR what black boxes did for aviation. In 1970, the industry was plagued by 6.5 fatal accidents for every million flights; today, that’s down to less than 0.5. “The aviation industry made the transition from reactive to proactive because of data,” he says—“from safe to ultra-safe.”

Grantcharov’s black boxes are now deployed at almost 40 institutions in the US, Canada, and Western Europe, from Mount Sinai to Duke to the Mayo Clinic. But are hospitals on the cusp of a new era of safety—or creating an environment of confusion and paranoia?

Shaking off the secrecy

The operating room is probably the most measured place in the hospital but also one of the most poorly captured. From team performance to instrument handling, there is “crazy big data that we’re not even recording,” says Alexander Langerman, an ethicist and head and neck surgeon at Vanderbilt University Medical Center. “Instead, we have post hoc recollection by a surgeon.”

Indeed, when things go wrong, surgeons are supposed to review the case at the hospital’s weekly morbidity and mortality conferences, but these errors are notoriously underreported. And even when surgeons enter the required notes into patients’ electronic medical records, “it’s undoubtedly—and I mean this in the least malicious way possible—dictated toward their best interests,” says Langerman. “It makes them look good.”

The operating room wasn’t always so secretive.

In the 19th century, operations often took place in large amphitheaters—they were public spectacles with a general price of admission. “Every seat even of the top gallery was occupied,” recounted the abdominal surgeon Lawson Tait about an operation in the 1860s. “There were probably seven or eight hundred spectators.”

However, around the 1900s, operating rooms became increasingly smaller and less accessible to the public—and its germs. “Immediately, there was a feeling that something was missing, that the public surveillance was missing. You couldn’t know what happened in the smaller rooms,” says Thomas Schlich, a historian of medicine at McGill University.

And it was nearly impossible to go back. In the 1910s a Boston surgeon, Ernest Codman, suggested a form of surveillance known as the end-result system, documenting every operation (including failures, problems, and errors) and tracking patient outcomes. Massachusetts General Hospital didn’t accept it, says Schlich, and Codman resigned in frustration.

Such opacity was part of a larger shift toward medicine’s professionalization in the 20th century, characterized by technological advancements, the decline of generalists, and the bureaucratization of health-care institutions. All of this put distance between patients and their physicians. Around the same time, and particularly from the 1960s onward, the medical field began to see a rise in malpractice lawsuits—at least partially driven by patients seeking answers when things went wrong.

This battle over transparency could theoretically be addressed by surgical recordings. But Grantcharov realized very quickly that the only way to get surgeons to use the black box was to make them feel protected. To that end, he has designed the system to record the action but hide the identity of both patients and staff, even deleting all recordings within 30 days. His idea is that no individual should be punished for making a mistake. “We want to know what happened, and how we can build a system that makes it difficult for this to happen,” Grantcharov says. Errors don’t occur because “the surgeon wakes up in the morning and thinks, ‘I’m gonna make some catastrophic event happen,’” he adds. “This is a system issue.”

AI that sees everything

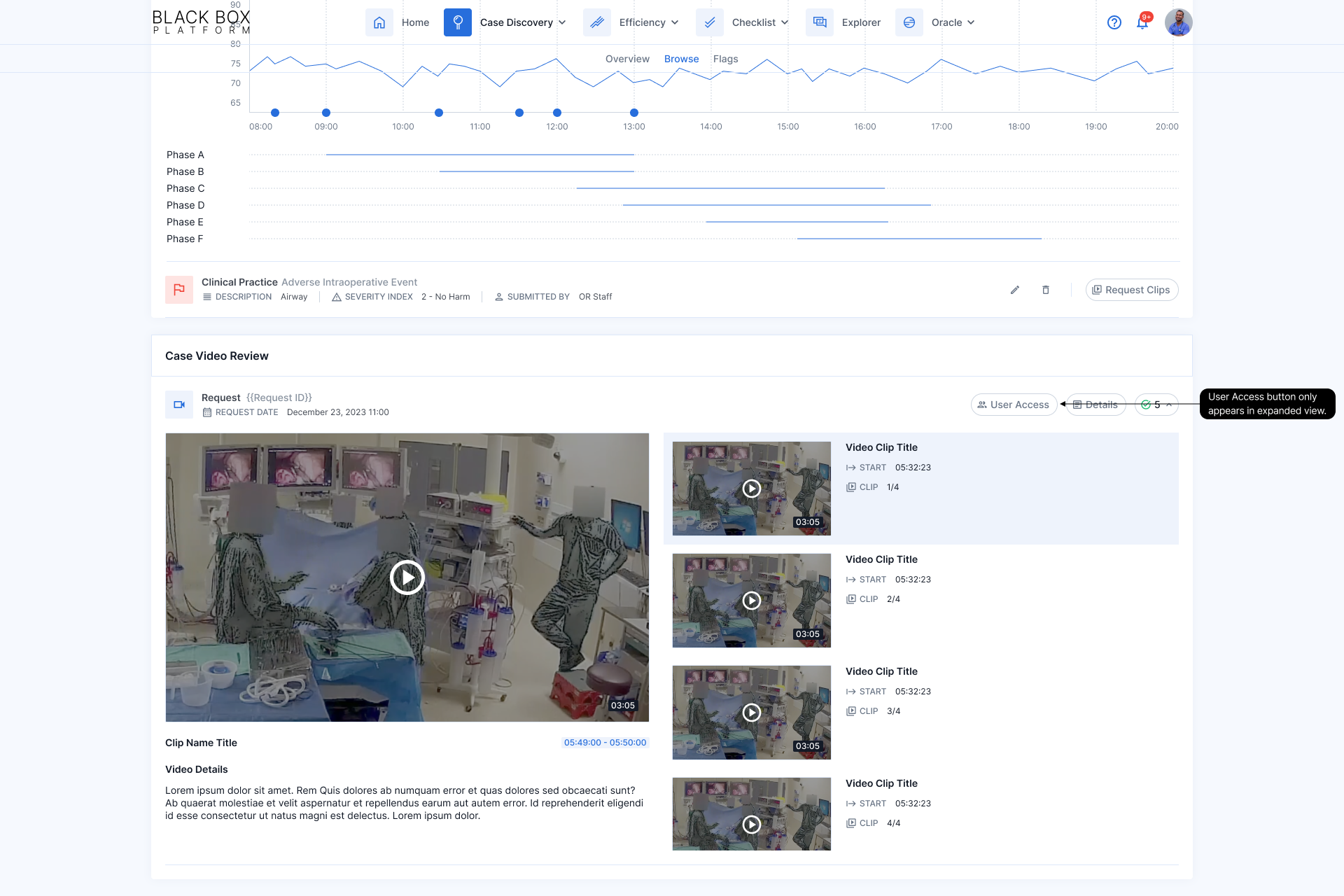

Grantcharov’s OR black box is not actually a box at all, but a tablet, one or two ceiling microphones, and up to four wall-mounted dome cameras that can reportedly analyze more than half a million data points per day per OR. “In three days, we go through the entire Netflix catalogue in terms of video processing,” he says.

The black-box platform uses a handful of computer vision models and ultimately spits out a series of short video clips and a dashboard of statistics—like how much blood was lost, which instruments were used, and how many auditory disruptions occurred. The system also identifies and breaks out key segments of the procedure (dissection, resection, and closure) so that instead of having to watch a whole three- or four-hour recording, surgeons can jump to the part of the operation where, for instance, there was major bleeding or a surgical stapler misfired.

Critically, each person in the recording is rendered anonymous; an algorithm distorts people’s voices and blurs out their faces, transforming them into shadowy, noir-like figures. “For something like this, privacy and confidentiality are critical,” says Grantcharov, who claims the anonymization process is irreversible. “Even though you know what happened, you can’t really use it against an individual.”

Another AI model works to evaluate performance. For now, this is done primarily by measuring compliance with the surgical safety checklist—a questionnaire that is supposed to be verbally ticked off during every type of surgical operation. (This checklist has long been associated with reductions in both surgical infections and overall mortality.) Grantcharov’s team is currently working to train more complex algorithms to detect errors during laparoscopic surgery, such as using excessive instrument force, holding the instruments in the wrong way, or failing to maintain a clear view of the surgical area. However, assessing these performance metrics has proved more difficult than measuring checklist compliance. “There are some things that are quantifiable, and some things require judgment,” Grantcharov says.

Each model has taken up to six months to train, through a labor-intensive process relying on a team of 12 analysts in Toronto, where the company was started. While many general AI models can be trained by a gig worker who labels everyday items (like, say, chairs), the surgical models need data annotated by people who know what they’re seeing—either surgeons, in specialized cases, or other labelers who have been properly trained. They have reviewed hundreds, sometimes thousands, of hours of OR videos and manually noted which liquid is blood, for instance, or which tool is a scalpel. Over time, the model can “learn” to identify bleeding or particular instruments on its own, says Peter Grantcharov, Surgical Safety Technologies’ vice president of engineering, who is Teodor Grantcharov’s son.

For the upcoming laparoscopic surgery model, surgeon annotators have also started to label whether certain maneuvers were correct or mistaken, as defined by the Generic Error Rating Tool—a standardized way to measure technical errors.

While most algorithms operate near perfectly on their own, Peter Grantcharov explains that the OR black box is still not fully autonomous. For example, it’s difficult to capture audio through ceiling mikes and thus get a reliable transcript to document whether every element of the surgical safety checklist was completed; he estimates that this algorithm has a 15% error rate. So before the output from each procedure is finalized, one of the Toronto analysts manually verifies adherence to the questionnaire. “It will require a human in the loop,” Peter Grantcharov says, but he gauges that the AI model has made the process of confirming checklist compliance 80% to 90% more efficient. He also emphasizes that the models are constantly being improved.

In all, the OR black box can cost about $100,000 to install, and analytics expenses run $25,000 annually, according to Janet Donovan, an OR nurse who shared with MIT Technology Review an estimate given to staff at Brigham and Women’s Faulkner Hospital in Massachusetts. (Peter Grantcharov declined to comment on these numbers, writing in an email: “We don’t share specific pricing; however, we can say that it’s based on the product mix and the total number of rooms, with inherent volume-based discounting built into our pricing models.”)

“Big Brother is watching”

Long Island Jewish Medical Center in New York, part of the Northwell Health system, was the first hospital to pilot OR black boxes, back in February 2019. The rollout was far from seamless, though not necessarily because of the tech.

“In the colorectal room, the cameras were sabotaged,” recalls Northwell’s chair of urology, Louis Kavoussi—they were turned around and deliberately unplugged. In his own OR, the staff fell silent while working, worried they’d say the wrong thing. “Unless you’re taking a golf or tennis lesson, you don’t want someone staring there watching everything you do,” says Kavoussi, who has since joined the scientific advisory board for Surgical Safety Technologies.

Grantcharov’s promises about not using the system to punish individuals have offered little comfort to some OR staff. When two black boxes were installed at Faulkner Hospital in November 2023, they threw the department of surgery into crisis. “Everybody was pretty freaked out about it,” says one surgical tech who asked not to be identified by name since she wasn’t authorized to speak publicly. “We were being watched, and we felt like if we did something wrong, our jobs were going to be on the line.”

It wasn’t that she was doing anything illegal or spewing hate speech; she just wanted to joke with her friends, complain about the boss, and be herself without the fear of administrators peering over her shoulder. “You’re very aware that you’re being watched; it’s not subtle at all,” she says. The early days were particularly challenging, with surgeons refusing to work in the black-box-equipped rooms and OR staff boycotting those operations: “It was definitely a fight every morning.”

At some level, the identity protections are only half measures. Before 30-day-old recordings are automatically deleted, Grantcharov acknowledges, hospital administrators can still see the OR number, the time of operation, and the patient’s medical record number, so even if OR personnel are technically de-identified, they aren’t truly anonymous. The result is a sense that “Big Brother is watching,” says Christopher Mantyh, vice chair of clinical operations at Duke University Hospital, which has black boxes in seven ORs. He will draw on aggregate data to talk generally about quality improvement at departmental meetings, but when specific issues arise, like breaks in sterility or a cluster of infections, he will look to the recordings and “go to the surgeons directly.”

In many ways, that’s what worries Donovan, the Faulkner Hospital nurse. She’s not convinced the hospital will protect staff members’ identities and is worried that these recordings will be used against them—whether through internal disciplinary actions or in a patient’s malpractice suit. In February 2023, she and almost 60 others sent a letter to the hospital’s chief of surgery objecting to the black box. She’s since filed a grievance with the state, with arbitration proceedings scheduled for October.

The legal concerns in particular loom large because, already, over 75% of surgeons report having been sued at least once, according to a 2021 survey by Medscape, an online resource hub for health-care professionals. To the layperson, any surgical video “looks like a freaking horror show,” says Vanderbilt’s Langerman. “Some plaintiff’s attorney is going to get ahold of this, and then some jury is going to see a whole bunch of blood, and then they’re not going to know what they’re seeing.” That prospect turns every recording into a potential legal battle.

From a purely logistical perspective, however, the 30-day deletion policy will likely insulate these recordings from malpractice lawsuits, according to Teneille Brown, a law professor at the University of Utah. She notes that within that timeframe, it would be nearly impossible for a patient to find legal representation, go through the requisite conflict-of-interest checks, and then file a discovery request for the black-box data. While deleting data to bypass the judicial system could provoke criticism, Brown sees the wisdom of Surgical Safety Technologies’ approach. “If I were their lawyer, I would tell them to just have a policy of deleting it because then they’re deleting the good and the bad,” she says. “What it does is orient the focus to say, ‘This is not about a public-facing audience. The audience for these videos is completely internal.’”

A data deluge

When it comes to improving quality, there are “the problem-first people, and then there are the data-first people,” says Justin Dimick, chair of the department of surgery at the University of Michigan. The latter, he says, push “massive data collection” without first identifying “a question of ‘What am I trying to fix?’” He says that’s why he currently has no plans to use the OR black boxes in his hospital.

Mount Sinai’s chief of general surgery, Celia Divino, echoes this sentiment, emphasizing that too much data can be paralyzing. “How do you interpret it? What do you do with it?” she asks. “This is always a disease.”

At Northwell, even Kavoussi admits that five years of data from OR black boxes hasn’t been used to change much, if anything. He says that hospital leadership is finally beginning to think about how to use the recordings, but a hard question remains: OR black boxes can collect boatloads of data, but what does it matter if nobody knows what to do with it?

Grantcharov acknowledges that the information can be overwhelming. “In the early days, we let the hospitals figure out how to use the data,” he says. “That led to a big variation in how the data was operationalized. Some hospitals did amazing things; others underutilized it.” Now the company has a dedicated “customer success” team to help hospitals make sense of the data, and it offers a consulting-type service to work through surgical errors. But ultimately, even the most practical insights are meaningless without buy-in from hospital leadership, Grantcharov suggests.

Getting that buy-in has proved difficult in some centers, at least partly because there haven’t yet been any large, peer-reviewed studies showing how OR black boxes actually help to reduce patient complications and save lives. “If there’s some evidence that a comprehensive data collection system—like a black box—is useful, then we’ll do it,” says Dimick. “But I haven’t seen that evidence yet.”

The best hard data so far is from a 2022 study published in the Annals of Surgery, in which Grantcharov and his team used OR black boxes to show that the surgical checklist had not been followed in a fifth of operations, likely contributing to excess infections. He also says that an upcoming study, scheduled to be published this fall, will show that the OR black box led to an improvement in checklist compliance and reduced ICU stays, reoperations, hospital readmissions, and mortality.

On a smaller scale, Grantcharov insists that he has built a steady stream of evidence showing the power of his platform. For example, he says, it’s revealed that auditory disruptions—doors opening, machine alarms and personal pagers going off—happen every minute in gynecology ORs, that a median 20 intraoperative errors are made in each laparoscopic surgery case, and that surgeons are great at situational awareness and leadership while nurses excel at task management.

Meanwhile, some hospitals have reported small improvements based on black-box data. Duke’s Mantyh says he’s used the data to check how often antibiotics are given on time. Duke and other hospitals also report turning to this data to help decrease the amount of time ORs sit empty between cases. By flagging when “idle” times are unexpectedly long and having the Toronto analysts review recordings to explain why, they’ve turned up issues ranging from inefficient communication to excessive time spent bringing in new equipment.

That can make a bigger difference than one might think, explains Ra’gan Laventon, clinical director of perioperative services at Texas’s Memorial Hermann Sugar Land Hospital: “We have multiple patients who are depending on us to get to their care today. And so the more time that’s added in some of these operational efficiencies, the more impactful it is to the patient.”

The real world

At Northwell, where some of the cameras were initially sabotaged, it took a couple of weeks for Kavoussi’s urology team to get used to the black boxes, and about six months for his colorectal colleagues. Much of the solution came down to one-on-one conversations in which Kavoussi explained how the data was automatically de-identified and deleted.

During his operations, Kavoussi would also try to defuse the tension, telling the OR black box “Good morning, Toronto,” or jokingly asking, “How’s the weather up there?” In the end, “since nothing bad has happened, it has become part of the normal flow,” he says.

The reality is that no surgeon wants to be an average operator, “but statistically, we’re mostly average surgeons, and that’s okay,” says Vanderbilt’s Langerman. “I’d hate to be a below-average surgeon, but if I was, I’d really want to know about it.” Like athletes watching game film to prepare for their next match, surgeons might one day review their recordings, assessing their mistakes and thinking about the best ways to avoid them—but only if they feel safe enough to do so.

“Until we know where the guardrails are around this, there’s such a risk—an uncertain risk—that no one’s gonna freaking let anyone turn on the camera,” Langerman says. “We live in a real world, not a perfect world.”

Simar Bajaj is an award-winning science journalist and 2024 Marshall Scholar. He has previously written for the Washington Post, Time magazine, the Guardian, NPR, and the Atlantic, as well as the New England Journal of Medicine, Nature Medicine, and The Lancet. He won Science Story of the Year from the Foreign Press Association in 2022 and the top prize for excellence in science communications from the National Academies of Science, Engineering, and Medicine in 2023. Follow him on X at @SimarSBajaj.