I’m confused and running late, because what do you wear for the rest of eternity?

This makes it sound like I’m dying, but it’s the opposite. I am, in a way, about to live forever, thanks to the AI video startup Synthesia. For the past several years, the company has produced AI-generated avatars, but today it launches a new generation, its first to take advantage of the latest advancements in generative AI, and they are more lifelike and expressive than anything I’ve ever seen. While today’s release means almost anyone will now be able to make a digital double, on this early April afternoon, before the technology goes public, they’ve agreed to make one of me.

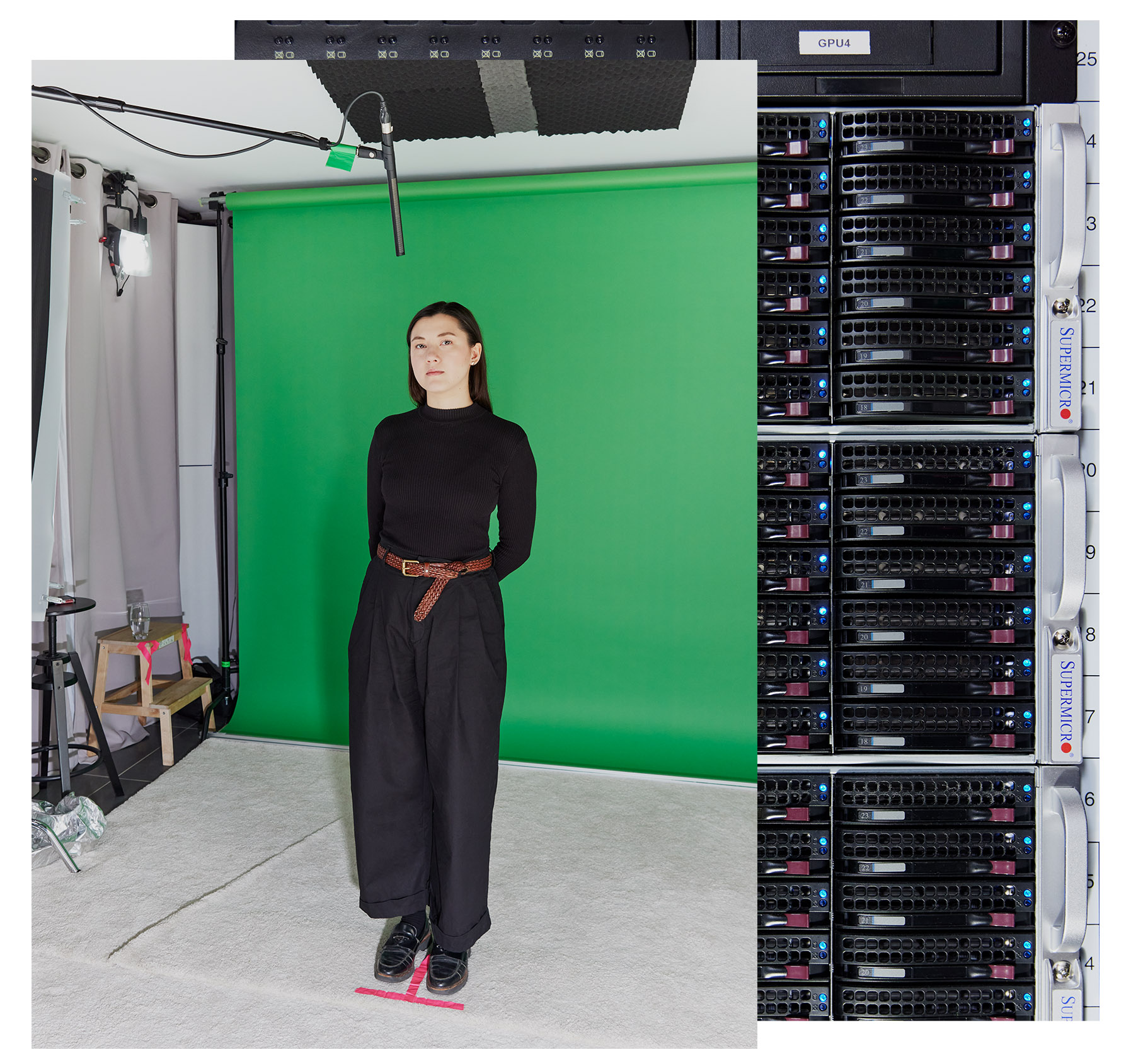

When I finally arrive at the company’s trendy studio in East London, I am greeted by Tosin Oshinyemi, the company’s production lead. He is going to guide and direct me through the data collection process (and by “data collection,” I mean the capture of my facial features, mannerisms, and more), much like he usually does for actors and Synthesia’s customers.

He introduces me to a waiting stylist and a makeup artist, and I curse myself for wasting so much time getting ready. Their job is to ensure that people have the kind of clothes that look good on camera and that they look consistent from one shot to the next. The stylist tells me my outfit is fine (phew), and the makeup artist touches up my face and tidies my baby hairs. The dressing room is decorated with hundreds of smiling Polaroids of people who have been digitally cloned before me.

Apart from the small supercomputer whirring in the corridor, which processes the data generated at the studio, this feels more like going into a news studio than entering a deepfake factory.

I joke that Oshinyemi has what MIT Technology Review might call a job title of the future: “deepfake creation director.”

“We like the term ‘synthetic media’ as opposed to ‘deepfake,’” he says.

It’s a subtle but, some would argue, notable difference in semantics. Both mean AI-generated videos or audio recordings of people doing or saying something that didn’t necessarily happen in real life. But deepfakes have a bad reputation. Since their inception nearly a decade ago, the term has come to signal something unethical, says Alexandru Voica, Synthesia’s head of corporate affairs and policy. Think of sexual content produced without consent, or political campaigns that spread disinformation or propaganda.

“Synthetic media is the more benign, productive version of that,” he argues. And Synthesia wants to offer the best version of that version.

Until now, all AI-generated videos of people have tended to have some stiffness, glitchiness, or other unnatural elements that make them pretty easy to differentiate from reality. Because they are so close to the real thing but not quite it, these videos can make people feel annoyed or uneasy or icky, a phenomenon commonly known as the uncanny valley. Synthesia claims its new technology will finally lead us out of the valley.

Thanks to rapid advancements in generative AI and a glut of training data created by human actors that has been fed into its AI model, Synthesia has been able to produce avatars that are indeed more humanlike and more expressive than their predecessors. The digital clones are better able to match their reactions and intonation to the sentiment of their scripts, acting more upbeat when talking about happy things, for instance, and more serious or sad when talking about unpleasant things. They also do a better job matching facial expressions, the tiny movements that can speak for us without words.

But this technological progress also signals a much larger social and cultural shift. Increasingly, so much of what we see on our screens is generated (or at least tinkered with) by AI, and it is becoming more and more difficult to distinguish what is real from what is not. This threatens our trust in everything we see, which could have very real, very dangerous consequences.

“I think we might just have to say goodbye to finding out about the truth in a quick way,” says Sandra Wachter, a professor at the Oxford Internet Institute, who researches the legal and ethical implications of AI. “The idea that you can just quickly Google something and know what’s fact and what’s fiction, I don’t think it works like that anymore.”

So while I was excited for Synthesia to make my digital double, I also wondered if the distinction between synthetic media and deepfakes is fundamentally meaningless. Even if the former centers a creator’s intent and, critically, a subject’s consent, is there really a way to make AI avatars safely if the end result is the same? And do we really want to get out of the uncanny valley if it means we can no longer grasp the truth?

But more urgently, it was time to find out what it’s like to see a post-truth version of yourself.

Almost the real thing

A month before my trip to the studio, I visited Synthesia CEO Victor Riparbelli at his office near Oxford Circus. As Riparbelli tells it, Synthesia’s origin story stems from his experiences exploring avant-garde, geeky techno music while growing up in Denmark. The internet allowed him to download software and produce his own songs without buying expensive synthesizers.

“I’m a huge believer in giving people the ability to express themselves in the way that they can, because I think that that provides for a more meritocratic world,” he tells me.

He saw the possibility of doing something similar with video when he came across research on using deep learning to transfer expressions from one human face to another on screen.

“What that showcased was the first time a deep-learning network could produce video frames that looked and felt real,” he says.

That research was conducted by Matthias Niessner, a professor at the Technical University of Munich, who cofounded Synthesia with Riparbelli in 2017, alongside University College London professor Lourdes Agapito and Steffen Tjerrild, whom Riparbelli had previously worked with on a cryptocurrency project.

Initially the company built lip-synching and dubbing tools for the entertainment industry, but it found that the bar for this technology’s quality was very high and there wasn’t much demand for it. Synthesia changed direction in 2020 and launched its first generation of AI avatars for corporate clients. That pivot paid off. In 2023, Synthesia achieved unicorn status, meaning it was valued at over $1 billion, making it one of the relatively few European AI companies to do so.

That first generation of avatars looked clunky, with looped movements and little variation. Subsequent iterations started looking more human, but they still struggled to say complicated words, and things were slightly out of sync.

The challenge is that people are used to other people’s faces. “We as humans know what real humans do,” says Jonathan Starck, Synthesia’s CTO. Since infancy, “you are really tuned in to people and faces. You know what’s right, so anything that’s not quite right really jumps out a mile.”

These earlier AI-generated videos, like deepfakes more broadly, were made using generative adversarial networks, or GANs, an older technique for generating images and videos that uses two neural networks that play off one another. It was a laborious and complicated process, and the technology was unstable.

But in the generative AI boom of the last year or so, the company has found it can create much better avatars using generative neural networks that produce higher quality more consistently. The more data these models are fed, the better they learn. Synthesia uses both large language models and diffusion models to do this; the former help the avatars react to the script, and the latter generate the pixels.

Despite the leap in quality, the company is still not pitching itself to the entertainment industry. Synthesia continues to see itself as a platform for businesses. Its bet is this: As people spend more time watching videos on YouTube and TikTok, there will be more demand for video content. Young people are already skipping traditional search and defaulting to TikTok for information presented in video form. Riparbelli argues that Synthesia’s tech could help companies convert their boring corporate comms and reports and training materials into content people will actually watch and engage with. He also suggests it could be used to make marketing materials.

He claims Synthesia’s technology is used by 56% of the Fortune 100, with the vast majority of those companies using it for internal communication. The company lists Zoom, Xerox, Microsoft, and Reuters as clients. Services start at $22 a month.

This, the company hopes, will be a cheaper and more efficient alternative to video from a professional production company, and one that may be nearly indistinguishable from it. Riparbelli tells me its newest avatars could easily fool a person into thinking they are real.

“I think we’re 98% there,” he says.

For better or worse, I’m about to see it for myself.

Don’t be garbage

In AI research, there is a saying: Garbage in, garbage out. If the data that went into training an AI model is trash, that will be reflected in the outputs of the model. The more data points the AI model has captured of my facial movements, microexpressions, head tilts, blinks, shrugs, and hand waves, the more lifelike the avatar will be.

Back in the studio, I’m trying really hard not to be garbage.

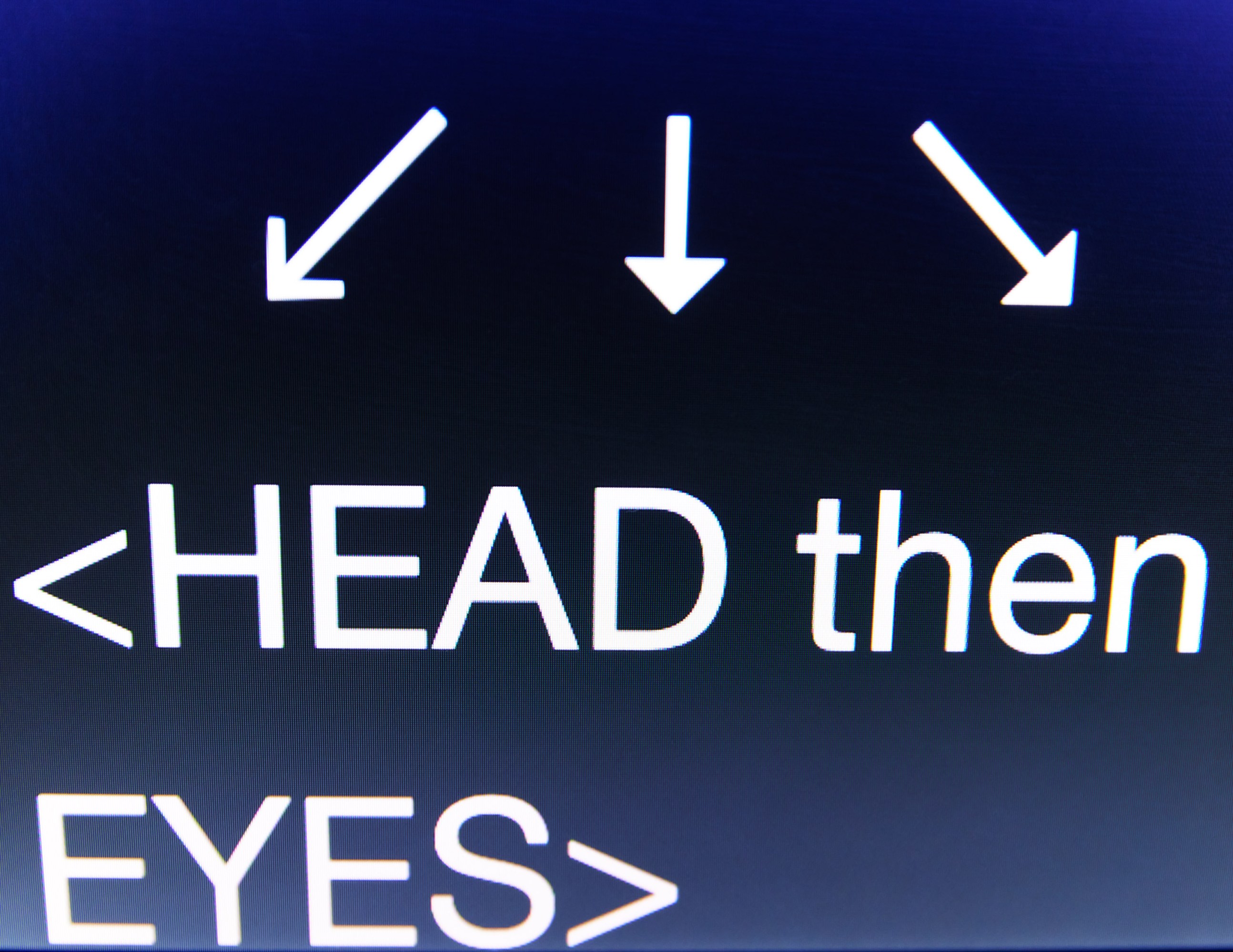

I am standing in front of a green screen, and Oshinyemi guides me through the initial calibration process, where I have to move my head and then eyes in a circular motion. Apparently, this will allow the system to understand my natural colors and facial features. I am then asked to say the sentence “All the boys ate a fish,” which will capture all the mouth movements needed to form vowels and consonants. We also film footage of me “idling” in silence.

He then asks me to read a script for a fictitious YouTuber in different tones, directing me on the spectrum of emotions I should convey. First I’m supposed to read it in a neutral, informative way, then in an encouraging way, an annoyed and complain-y way, and finally an excited, convincing way.

“Hey, everyone, welcome back to Elevate Her with your host, Jess Mars. It’s great to have you here. We’re about to take on a topic that’s pretty sensitive and honestly hits close to home: dealing with criticism in our spiritual journey,” I read off the teleprompter, simultaneously trying to visualize ranting about something to my partner during the complain-y version. “No matter where you look, it feels like there’s always a critical voice ready to chime in, doesn’t it?”

Don’t be garbage, don’t be garbage, don’t be garbage.

“That was really good. I was watching it and I was like, ‘Well, this is true. She’s definitely complaining,’” Oshinyemi says, encouragingly. Next time, maybe add some judgment, he suggests.

We film several takes featuring different variations of the script. In some versions I’m allowed to move my hands around. In others, Oshinyemi asks me to hold a metal pin between my fingers as I do. This is to test the “edges” of the technology’s capabilities when it comes to communicating with hands, Oshinyemi says.

Historically, making AI avatars look natural and matching mouth movements to speech has been a very difficult challenge, says David Barber, a professor of machine learning at University College London who is not involved in Synthesia’s work. That is because the problem goes far beyond mouth movements; you have to think about eyebrows, all the muscles in the face, shoulder shrugs, and the numerous different small movements that humans use to express themselves.

Synthesia has worked with actors to train its models since 2020, and their doubles make up the 225 stock avatars that are available for customers to animate with their own scripts. But to train its latest generation of avatars, Synthesia needed more data; it has spent the past year working with around 1,000 professional actors in London and New York. (Synthesia says it does not sell the data it collects, although it does release some of it for academic research purposes.)

The actors previously got paid each time their avatar was used, but now the company pays them an up-front fee to train the AI model. Synthesia uses their avatars for three years, at which point actors are asked if they want to renew their contracts. If so, they come into the studio to make a new avatar. If not, the company will delete their data. Synthesia’s enterprise customers can also generate their own custom avatars by sending someone into the studio to do much of what I’m doing.

Between takes, the makeup artist comes in and does some touch-ups to make sure I look the same in every shot. I can feel myself blushing because of the lights in the studio, but also because of the acting. After the team has collected all the shots it needs to capture my facial expressions, I go downstairs to read more text aloud for voice samples.

This process requires me to read a passage indicating that I explicitly consent to having my voice cloned, and that it can be used on Voica’s account on the Synthesia platform to generate videos and speech.

Consent is essential

This process is very different from the way many AI avatars, deepfakes, or synthetic media (whatever you want to call them) are created.

Most deepfakes aren’t created in a studio. Studies have shown that the vast majority of deepfakes online are nonconsensual sexual content, usually using images stolen from social media. Generative AI has made the creation of these deepfakes easy and cheap, and there have been several high-profile cases in the US and Europe of children and women being abused in this way. Experts have also raised alarms that the technology can be used to spread political disinformation, a particularly acute threat given the record number of elections happening around the world this year.

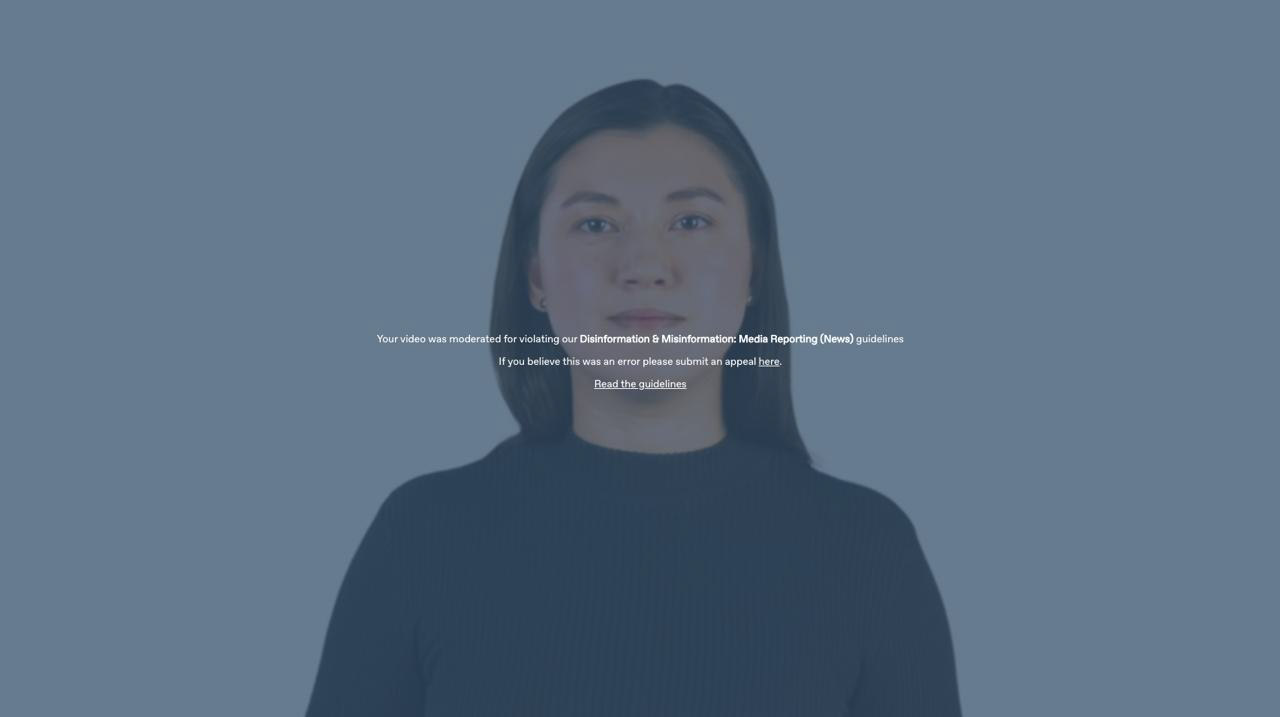

Synthesia’s policy is to not create avatars of people without their explicit consent. But it hasn’t been immune from abuse. Last year, researchers found pro-China misinformation that was created using Synthesia’s avatars and packaged as news, which the company said violated its terms of service.

Since then, the company has put more rigorous verification and content moderation systems in place. It applies a watermark with information on where and how the AI avatar videos were created. Where it once had four in-house content moderators, people doing this work now make up 10% of its 300-person staff. It also hired an engineer to build better AI-powered content moderation systems. These filters help Synthesia vet every single thing its customers try to generate. Anything suspicious or ambiguous, such as content about cryptocurrencies or sexual health, gets forwarded to the human content moderators. Synthesia also keeps a record of all the videos its system creates.

And while anyone can join the platform, many features aren’t available until people go through an extensive vetting system similar to that used by the banking industry, which includes talking to the sales team, signing legal contracts, and submitting to security auditing, says Voica. Entry-level customers are limited to producing strictly factual content, and only enterprise customers using custom avatars can generate content that contains opinions. On top of this, only accredited news organizations are allowed to create content on current affairs.

“We can’t claim to be perfect. If people report things to us, we take quick action, [such as] banning or limiting individuals or organizations,” Voica says. But he believes these measures work as a deterrent, which means most bad actors will turn to freely available open-source tools instead.

I put some of these limits to the test when I head to Synthesia’s office for the next step in my avatar generation process. In order to create the videos that will feature my avatar, I have to write a script. Using Voica’s account, I decide to use passages from Hamlet, as well as previous articles I have written. I also use a new feature on the Synthesia platform, which is an AI assistant that transforms any web link or document into a ready-made script. I try to get my avatar to read news about the European Union’s new sanctions against Iran.

Voica immediately texts me: “You got me in trouble!”

The system has flagged his account for trying to generate content that is restricted.

Offering services without these restrictions would be “a great growth strategy,” Riparbelli grumbles. But “ultimately, we have very strict rules on what you can create and what you cannot create. We think the right way to roll out these technologies in society is to be a little bit over-restrictive at the beginning.”

Still, even if these guardrails operated perfectly, the ultimate result would nevertheless be an internet where everything is fake. And my experiment makes me wonder how we could possibly prepare ourselves.

Our information landscape already feels very murky. On the one hand, there’s heightened public awareness that AI-generated content is flourishing and could be a powerful tool for misinformation. But on the other, it’s still unclear whether deepfakes are used for misinformation at scale and whether they’re broadly moving the needle to change people’s beliefs and behaviors.

If people become too skeptical about the content they see, they might stop believing in anything at all, which could enable bad actors to take advantage of this trust vacuum and lie about the authenticity of real content. Researchers have called this the “liar’s dividend.” They warn that politicians, for example, could claim that genuinely incriminating information was fake or created using AI.

Claire Leibowicz, the head of AI and media integrity at the nonprofit Partnership on AI, says she worries that growing awareness of this gap will make it easier to “plausibly deny and cast doubt on real material or media as evidence in many different contexts, not only in the news, [but] also in the courts, in the financial services industry, and in many of our institutions.” She tells me she’s heartened by the resources Synthesia has devoted to content moderation and consent but says that process is never flawless.

Even Riparbelli admits that in the short term, the proliferation of AI-generated content will probably cause trouble. While people have been trained not to believe everything they read, they still tend to trust images and videos, he adds. He says people now need to test AI products for themselves to see what is possible, and should not trust anything they see online unless they have verified it.

Never mind that AI regulation is still patchy, and the tech sector’s efforts to verify content provenance are still in their early stages. Can consumers, with their varying degrees of media literacy, really fight the growing wave of harmful AI-generated content through individual action?

Watch out, PowerPoint

The day after my final visit, Voica emails me the videos with my avatar. When the first one starts playing, I am taken aback. It’s as painful as seeing yourself on camera or hearing a recording of your voice. Then I catch myself. At first I thought the avatar was me.

The more I watch videos of “myself,” the more I spiral. Do I really squint that much? Blink that much? And move my jaw like that? Jesus.

It’s good. It’s really good. But it’s not perfect. “Weirdly good animation,” my partner texts me.

“But the voice sometimes sounds exactly like you, and at other times like a generic American and with a weird tone,” he adds. “Weird AF.”

He’s right. The voice is sometimes me, but in real life I umm and ahh more. What’s remarkable is that it picked up on an irregularity in the way I talk. My accent is a transatlantic mess, confused by years spent living in the UK, watching American TV, and attending international school. My avatar sometimes says the word “robot” in a British accent and other times in an American accent. It’s something that probably nobody else would notice. But the AI did.

My avatar’s range of emotions is also limited. It delivers Shakespeare’s “To be or not to be” speech very matter-of-factly. I had guided it to be furious when reading a story I wrote about Taylor Swift’s nonconsensual nude deepfakes; the avatar is complain-y and judgy, for sure, but not angry.

This isn’t the first time I’ve made myself a test subject for new AI. Not too long ago, I tried generating AI avatar images of myself, only to get a bunch of nudes. That experience was a jarring example of just how biased AI systems can be. But this experience, and this particular way of being immortalized, was definitely on a different level.

Carl Öhman, an assistant professor at Uppsala University who has studied digital remains and is the author of a new book, The Afterlife of Data, calls avatars like the ones I made “digital corpses.”

“It looks exactly like you, but no one’s home,” he says. “It would be the equivalent of cloning you, but your clone is dead. And then you’re animating the corpse, so that it moves and talks, with electrical impulses.”

That’s kind of how it feels. The little, nuanced ways I don’t recognize myself are enough to put me off. Then again, the avatar could quite possibly fool anyone who doesn’t know me very well. It really shines when presenting a story I wrote about how the field of robotics could be getting its own ChatGPT moment; the digital AI assistant summarizes the long read into a good short video, which my avatar narrates. It’s not Shakespeare, but it’s better than many of the corporate presentations I’ve had to sit through. I think if I were using this to deliver an end-of-year report to my colleagues, maybe that level of authenticity would be enough.

And that is the sell, according to Riparbelli: “What we’re doing is more like PowerPoint than it is like Hollywood.”

The newest generation of avatars certainly aren’t ready for the silver screen. They’re still stuck in portrait mode, only showing the avatar front-facing and from the waist up. But in the not-too-distant future, Riparbelli says, the company hopes to create avatars that can communicate with their hands and have conversations with one another. It is also planning for full-body avatars that can walk and move around in a space that a person has generated. (The rig to enable this technology already exists; in fact it’s where I am in the image at the top of this piece.)

But do we really want that? It feels like a bleak future where humans are consuming AI-generated content presented to them by AI-generated avatars and using AI to repackage that into more content, which will likely be scraped to generate more AI. If nothing else, this experiment made clear to me that the technology sector urgently needs to step up its content moderation practices and ensure that content provenance techniques such as watermarking are robust.

Even if Synthesia’s technology and content moderation aren’t yet perfect, they’re significantly better than anything I’ve seen in the field before, and this is after only a year or so of the current boom in generative AI. AI development moves at breakneck speed, and it is both exciting and daunting to consider what AI avatars will look like in just a few years. Maybe in the future we will have to adopt safewords to indicate that you are in fact communicating with a real human, not an AI.

But that day is not today.

I found it weirdly comforting that in one of the videos, my avatar rants about nonconsensual deepfakes and says, in a sociopathically happy voice, “The tech giants? Oh! They’re making a killing!”

I would never.