In 2018, Sam Cole, a reporter at Motherboard, discovered a new and disturbing corner of the internet. A Reddit user by the name of “deepfakes” was posting nonconsensual fake porn videos, using an AI algorithm to swap celebrities’ faces into real porn. Cole sounded the alarm on the phenomenon, right as the technology was about to explode. A year later, deepfake porn had spread far beyond Reddit, with easily accessible apps that could “strip” clothes off any woman photographed.

Since then deepfakes have had a bad rap, and rightly so. The vast majority of them are still used for fake pornography. A female investigative journalist was severely harassed and temporarily silenced by such activity, and more recently, a female poet and novelist was frightened and shamed. There’s also the risk that political deepfakes will generate convincing fake news that could wreak havoc in unstable political environments.

But as the algorithms for manipulating and synthesizing media have grown more powerful, they’ve also given rise to positive applications, as well as some that are humorous or mundane. Here is a roundup of some of our favorites in rough chronological order, and why we think they’re a sign of what’s to come.

Whistleblower shielding

In June, Welcome to Chechnya, an investigative film about the persecution of LGBTQ individuals in the Russian republic, became the first documentary to use deepfakes to protect its subjects’ identities. The activists fighting the persecution, who served as the main characters of the story, lived in hiding to avoid being tortured or killed. After exploring many methods to conceal their identities, director David France settled on giving them deepfake “covers.” He asked other LGBTQ activists from around the world to lend their faces, which were then grafted onto the faces of the people in his film. The technique allowed France to preserve the integrity of his subjects’ facial expressions and thus their pain, fear, and humanity. In total the film shielded 23 individuals, pioneering a new form of whistleblower protection.

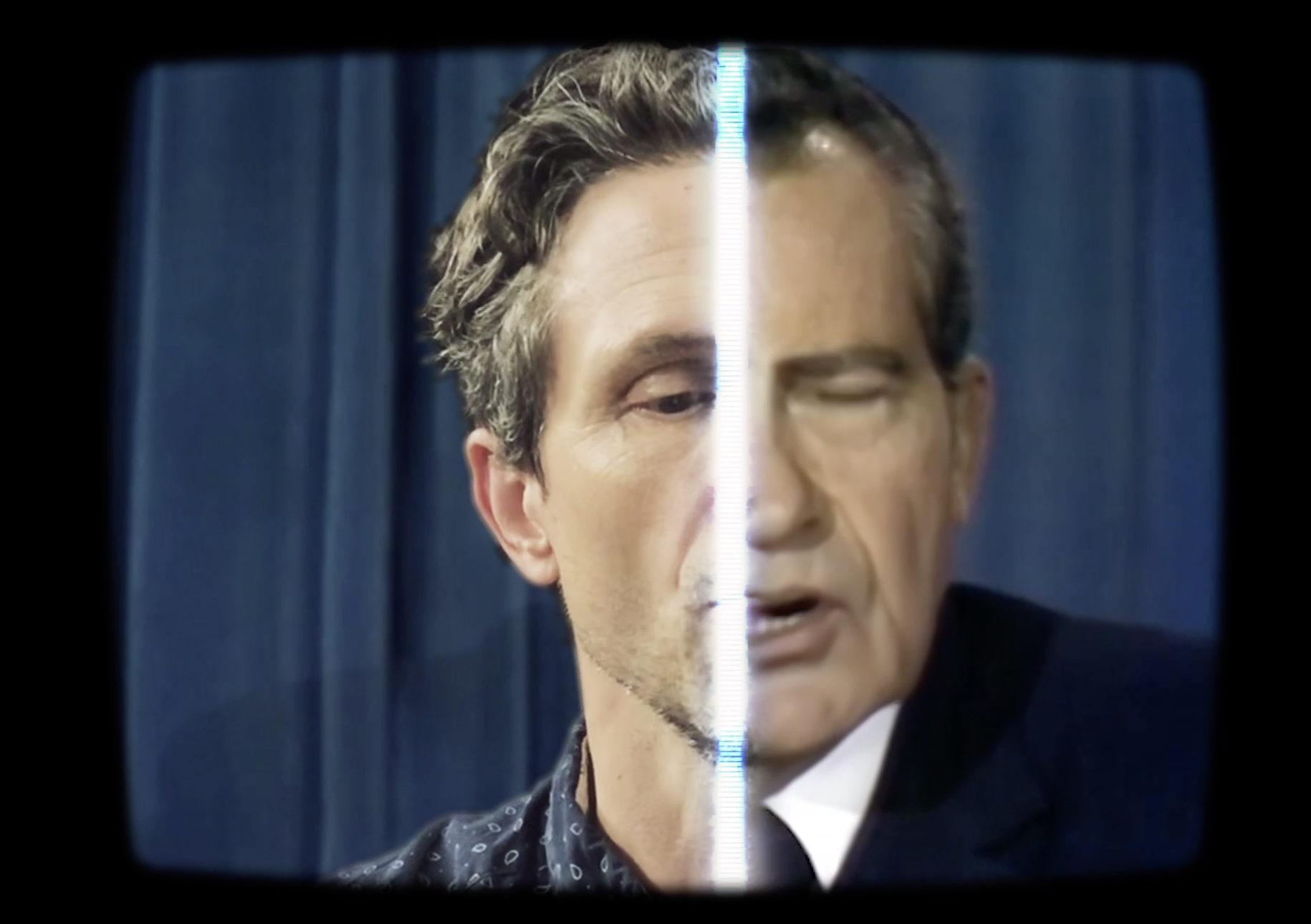

Revisionist history

In July, two MIT researchers, Francesca Panetta and Halsey Burgund, released a project to create an alternative history of the 1969 Apollo moon landing. Called In Event of Moon Disaster, it uses the speech that President Richard Nixon would have delivered had the momentous occasion not gone according to plan. The researchers partnered with two separate companies for deepfake audio and video, and hired an actor to provide the “base” performance. They then ran his voice and face through the two types of software, and stitched them together into a final deepfake Nixon.

While this project demonstrates how deepfakes could create powerful alternative histories, another one hints at how deepfakes could bring real history to life. In February, Time magazine re-created Martin Luther King Jr.’s March on Washington for virtual reality to immerse viewers in the scene. The project didn’t use deepfake technology, but Chinese tech giant Tencent later cited it in a white paper about its plans for AI, saying deepfakes could be used for similar purposes in the future.

Memes

In late summer, the memersphere got its hands on simple-to-make deepfakes and unleashed the results into the digital universe. One viral meme in particular, called “Baka Mitai” (pictured above), quickly surged as people learned to use the technology to create their own versions. The specific algorithm powering the madness came from a 2019 research paper that allows a user to animate a photo of one person’s face with a video of someone else’s. The effect isn’t high quality by any stretch of the imagination, but it sure produces quality fun. The phenomenon is not entirely surprising; play and parody have been a driving force in the popularization of deepfakes and other media manipulation tools. It’s why some experts emphasize the need for guardrails to prevent satire from blurring into abuse.

Sports ads

Busy schedules make it hard to get celebrity sports stars in the same room at the best of times. In the middle of a lockdown, it’s impossible. So when you need to film a commercial in LA featuring people in quarantine bubbles across the country, the only option is to fake it. In August the streaming site Hulu ran an ad to promote the return of sports to its service, starring NBA player Damian Lillard, WNBA player Skylar Diggins-Smith, and Canadian hockey player Sidney Crosby. We see these stars giving up their sourdough baking and returning to their sports, wielding basketballs and hockey sticks. Except we don’t: the faces of those stars were superimposed onto body doubles using deepfake tech. The algorithm was trained on footage of the players captured over Zoom. Computer trickery has been used to fake this kind of thing for years, but deepfakes make it easier and cheaper than ever, and this year of remote everything has given the tech a boost. Hulu wasn’t the only one. Other advertisers, including ESPN, experimented with deepfakes as well.

Political campaigns

In September, during the lead-up to the US presidential elections, the nonpartisan advocacy group RepresentUs released a pair of deepfake ads. They featured fake versions of Russian president Vladimir Putin and North Korean leader Kim Jong-un delivering the same message: that neither needed to interfere with US elections, because America would ruin its democracy by itself. This wasn’t the first use of deepfakes during a political campaign. In February, Indian politician Manoj Tiwari used deepfakes in a campaign video to make it appear as if he were speaking Haryanvi, the Hindi dialect spoken by his target voters. But RepresentUs notably flipped the script on the typical narrative around political deepfakes. While experts often worry about the technology’s potential to sow confusion and disrupt elections, the group sought to do the exact opposite: raise awareness of voter suppression to protect voting rights and increase turnout.

TV shows

If deepfake commercials and one-off stunts are starting to feel familiar, trust the makers of South Park to take it to extremes. In October, Trey Parker and Matt Stone debuted their new creation, Sassy Justice, the first deepfake TV show. The weekly satirical show revolves around the character Sassy Justice, a local news reporter with a deepfaked Trump face. Sassy interviews deepfaked figures such as Jared Kushner (with Kushner’s face superimposed on a child) and Al Gore. With Sassy Justice, deepfakes have gone beyond marketing gimmick or malicious deception to hit the cultural mainstream. Not only is the technology used to create the characters, but it is the subject of satire itself. In the first episode, Sassy “Trump” Justice, playing a consumer advocate, investigates the truth behind “deepfake news.”